One of the most exciting technical trends of this generation has been that of virtualization. Organizations across the world have begun to embrace virtualization as an alternative to maintaining hardware-intensive legacy networks. This move has been driven by the massive increase in resource-intensive applications and gargantuan storage resource requirements. All of this data needs to be maintained and supported one way or another.

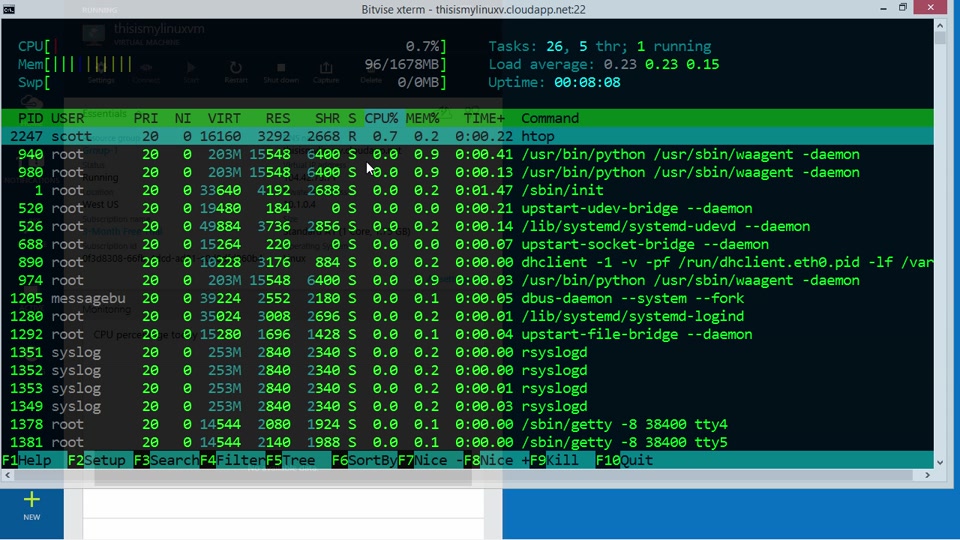

With virtualization, you provide end-user devices that map onto central server resources. Although the terminal seems to be using local CPU and storage capacity, those facilities in fact operate remotely. The endpoint is termed a “virtual machine” and it can be hosted on a fully functioning desktop computer or implemented with dumb terminals that have no more processing ability than a boot-up process that contacts the network.

The “thin client” model reduces the cost of desktop equipment and also centralizes all hardware in the IT department. This reduces the number of onsite visits needed by support staff because, with fewer working parts, thin clients are less likely to fail. The virtualization scenario puts more pressure on the network, however: with no software or storage held close to the user, permanently available network services are vital in order to provide users with computing power.

The ability of virtualization to present remote resources as if they were provisioned locally has become more widely implemented recently, thanks to two developments: cloud services and mobile devices. Online access to “software as a service” and the low cost of disk space offered by remote providers make these off-site resources economically viable.

The scalability of a subscription service charged by usage, with no overhead costs for expansion, also makes the management of IT services a great deal easier. Mobile devices release consultants and field operatives from the need to return to the office in order to perform administrative tasks. However, while these small devices get workers out on site and keep them constantly productive, they have little room for storage, so centralizing software and disks on a server is the only way to make these devices viable for business functions.

It has become clear in recent years that traditional storage solutions and servers aren’t holding up very well. Storage resources are commonly swamped with data to the point of poor performance, and when a department does purchase new hardware, it is out of date within a couple of years.

Virtualization has been providing many companies with a way to navigate around the problem of poor performance and to deploy virtual machines to increase the capability of an organization’s infrastructure. Hardware and software virtualization have been helping many organizations to increase the scalability of their equipment, boost efficiency, and reduce costs. In this article we look at the fundamentals of virtualization.

What is Virtualization?

For some time, physical hardware has raised a number of problems for organizations around the world. Building an enterprise-grade network has been inseparable from purchasing expensive equipment. Enterprises had to take into account the amount of physical space they had to accommodate new hardware as much as the costs involved. The same applies to software.

Virtualization turns hardware, software, storage, desktops and networks into virtualized environments which can be interacted with remotely. In practice, the term virtualization is generally used to refer to virtual machines. Virtual machines are computer files that act as if they were physical computers.

They allow a company to deploy additional machines without the need to front the overhead costs and maintenance of new physical hardware. There are many different types of virtualized systems and levels of virtualized infrastructure. These include full, partial, and paravirtualized hardware, as well as virtual machines, storage, desktops, and networks. Below we look at a few of the different levels of virtualization that are available to an organization.

Levels of Virtualization

There are many ways IT infrastructure can be virtualized. These vary in technique and scale depending on their application. We’re going to run through some of the most well-known types of virtualization before delving deeper into hardware, virtual machine, storage and desktop virtualization.

Below are some of the most widely known types of virtualization in use:

Hardware Virtualization – Using physical hardware on a virtual basis. This can be separated into three levels of hardware virtualization referred to as full, partial and paravirtualization.

Full – The Virtual Machine Monitor takes care of all interactions between the operating system and hardware. The operating system is thus separate from the hardware layer.

Partial – Many hardware vendors have designed products to work alongside virtualized systems to increase overall performance, an approach often called hardware-assisted virtualization. AMD-V and Intel Virtualization Technology fall into this category.

Paravirtualized – The guest operating system is aware that it has been virtualized and is modified to call the hypervisor directly in place of instructions that cannot easily be virtualized. Generally, this is found in Linux environments.

Virtual Machine – A virtual machine is a virtualized version of a physical computer that interacts with programs and applications like a physical device. This is a type of software virtualization that can be applied to virtual appliances, applications, and operating systems as well.

Storage Virtualization – Storage virtualization separates the logical view of storage that users and applications see from the physical devices that provide it.

Desktop Virtualization – Desktop Virtualization gives users the ability to operate a remote desktop environment from their devices.

Network Virtualization – Network virtualization creates a separate, logically isolated network that runs as part of a larger physical network.

Virtual Machine

One of the most common types of virtualization that you will encounter is the virtual machine. Virtual machines are computer files that act as if they were a real computer. In essence, they are virtual computers that behave the same as a physical computer connected to the network. As such, they can interact with applications and appliances depending on what the user needs. Each virtual machine is isolated from the rest of the system so that its software can’t be edited without permission.

It is important to note that you can run more than one virtual machine on one computer. On a server, running multiple virtual machines is achieved with a hypervisor, which acts as a centralized management solution. Virtual machines are particularly useful because they allow an enterprise to take on new machines without having to pay for physical hardware.

That being said, an organization also needs to take into account the virtual resources needed to sustain such a machine. While these resources can be allocated across multiple storage solutions, they still need to be considered before making the transition.

Hardware Virtualization

One answer to the limitations of physical equipment has been to virtualize hardware. To achieve this, large organizations have been using a hypervisor, also known as a Virtual Machine Monitor. The Virtual Machine Monitor shares out the physical resources of your hardware by converting them into abstract resources. This creates a virtual environment where resources can be allocated as if they were part of a physical piece of hardware.
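
The idea of a hypervisor handing out slices of one physical machine can be sketched in a few lines of Python. This is a toy model, not how any real hypervisor is implemented: the `Host` class, its fields, and the VM names are all hypothetical, and it assumes resources are never overcommitted.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A physical server whose CPU cores and memory are shared out to VMs."""
    cpu_cores: int
    memory_gb: int
    # VM name -> (cores, memory in GB) reserved for that VM
    vms: dict = field(default_factory=dict)

    def free(self):
        """Return (cores, memory_gb) not yet assigned to any VM."""
        used_cpu = sum(cpu for cpu, _ in self.vms.values())
        used_mem = sum(mem for _, mem in self.vms.values())
        return self.cpu_cores - used_cpu, self.memory_gb - used_mem

    def create_vm(self, name, cpu, memory_gb):
        """Reserve resources for a new VM, or refuse if the host is full."""
        if name in self.vms:
            raise ValueError(f"VM {name!r} already exists")
        free_cpu, free_mem = self.free()
        if cpu > free_cpu or memory_gb > free_mem:
            raise RuntimeError(f"not enough free resources for {name!r}")
        self.vms[name] = (cpu, memory_gb)

    def destroy_vm(self, name):
        """Release a VM's resources back to the shared pool."""
        del self.vms[name]


host = Host(cpu_cores=16, memory_gb=64)
host.create_vm("web", cpu=4, memory_gb=8)
host.create_vm("db", cpu=8, memory_gb=32)
print(host.free())  # (4, 24)
```

Two virtual machines now share one physical host, and the remaining capacity stays available for a third. Real hypervisors add scheduling, isolation, and often overcommitment on top of this basic bookkeeping.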

Virtualization has become an important trend because it allows companies to move beyond the limitations of their physical equipment. Virtual resources are scalable and can allocate resources much more efficiently than a hardware-based environment.

The main reason why organizations opt to use hardware virtualization is that it allows the user to share physical resources between virtual machines. As a consequence, costs are lower because you can run multiple operating systems on one physical machine and manage them through a single pane of glass.

Servers in particular benefit because virtualization greatly reduces the amount of resources needed to sustain enterprise-grade performance. It also has the advantage of acting as a backup solution, because you can migrate virtual machines in the event that there is a problem.

Storage Virtualization

Storage virtualization provides the user with a perspective of their network storage that obscures physical infrastructure. In a nutshell, storage virtualization makes it appear to the user like remote storage solutions are connected directly to the network. As a consequence, multiple storage devices can be combined together to act as one large storage device.

This has the advantage of allowing the user to allocate space to applications and processes as it is needed. It also helps improve the performance of storage as usage can be spread amongst multiple storage devices. This means that the strain that would be placed on a single traditional storage device is greatly reduced.
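
As a rough illustration of pooling, the sketch below treats several devices as one block of capacity and carves volumes out of it on demand. The `StoragePool` class, the device names, and the sizes are all made up for the example, and the placement rule (use the device with the most free space first) is just one simple assumption.

```python
class StoragePool:
    """Presents several physical devices as one logical pool of capacity."""

    def __init__(self, device_sizes_gb):
        # device name -> free capacity in GB
        self.free_gb = dict(device_sizes_gb)
        # volume name -> list of (device, gb) extents backing that volume
        self.volumes = {}

    def total_free(self):
        """Free capacity across every device in the pool."""
        return sum(self.free_gb.values())

    def allocate(self, volume, size_gb):
        """Carve a volume out of the pool, spanning devices if necessary."""
        if volume in self.volumes:
            raise ValueError(f"volume {volume!r} already exists")
        if size_gb > self.total_free():
            raise RuntimeError("pool is out of space")
        extents, remaining = [], size_gb
        # Use the device with the most free space first.
        for device in sorted(self.free_gb, key=self.free_gb.get, reverse=True):
            take = min(remaining, self.free_gb[device])
            if take > 0:
                self.free_gb[device] -= take
                extents.append((device, take))
                remaining -= take
            if remaining == 0:
                break
        self.volumes[volume] = extents
        return extents


pool = StoragePool({"disk-a": 100, "disk-b": 200, "disk-c": 50})
print(pool.allocate("app-data", 250))  # [('disk-b', 200), ('disk-a', 50)]
print(pool.total_free())               # 100
```

The 250 GB volume is larger than any single device, yet the application only ever sees one volume called `app-data`.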

In addition, storage virtualization also provides a lot of value in terms of recovery. On a traditional network, if a storage device fails then there is a good chance that you’re going to lose that data for good. However, by virtualizing your storage, data can be stored on multiple devices at once which minimizes the risk of data being lost in the event of a failure.
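
The recovery benefit can be shown with a small simulation. This is only a sketch under stated assumptions: the `ReplicatedStore` class is hypothetical, devices are modeled as in-memory dictionaries, and each object is simply copied to the two devices holding the fewest objects.

```python
class ReplicatedStore:
    """Keeps each object on several devices so one failure loses nothing."""

    def __init__(self, device_names, copies=2):
        if copies > len(device_names):
            raise ValueError("need at least as many devices as copies")
        # device name -> {key: data} held on that device
        self.devices = {name: {} for name in device_names}
        self.copies = copies

    def write(self, key, data):
        """Store `data` on the devices currently holding the fewest objects."""
        # Drop any older copies first so stale data cannot be read back.
        for contents in self.devices.values():
            contents.pop(key, None)
        targets = sorted(self.devices, key=lambda d: len(self.devices[d]))
        for name in targets[: self.copies]:
            self.devices[name][key] = data

    def fail(self, name):
        """Simulate a device dying and taking its contents with it."""
        del self.devices[name]

    def read(self, key):
        """Return the data from any surviving device that still has it."""
        for contents in self.devices.values():
            if key in contents:
                return contents[key]
        raise KeyError(key)


store = ReplicatedStore(["disk-a", "disk-b", "disk-c"], copies=2)
store.write("invoice.pdf", b"report contents")
store.fail("disk-a")
print(store.read("invoice.pdf"))  # b'report contents'
```

Losing `disk-a` does not lose the file, because a second copy sits on another device. A production system would also re-create the missing copy after a failure, which this sketch leaves out.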

Desktop Virtualization

With desktop virtualization, users can access their operating systems and applications through a virtual machine. Desktop virtualization uses a hypervisor, which provides a platform through which to manage and deploy additional virtual machines. This is desktop virtualization operating at the server level, as opposed to desktop-hosted products such as Microsoft Virtual PC or VMware Fusion.

The rationale behind desktop virtualization is that desktop computers are some of the most resource-intensive items on a network. A substantial amount of resources are needed to maintain the number of devices needed to sustain an enterprise scale network. Virtualizing desktops helps to reduce the amount of resource commitment needed.

It also has the advantage of minimizing the points of vulnerability. Every desktop within a network is a point of access that can be attacked. Running desktops virtually on a server allows you to manage all these desktops from one secure location. You can also manage upgrades from this position, reducing the amount of administration that you need to do.

However, desktop virtualization is not without its problems. While you reduce the costs associated with maintaining physical hardware, you gain new concerns in the form of requiring more powerful servers and greater network bandwidth. This isn’t the end of the world, but it is something to consider with regard to desktop virtualization.

Who are the Main Providers of Virtualized Solutions?

VMware

VMware is a name that keeps cropping up within the world of virtualized solutions, and for good reason. It produces some of the best hypervisors in the world with VMware ESX (Elastic Sky X) and ESXi (Elastic Sky X Integrated). ESX is a server virtualization platform which can be managed through one service console based on Linux. ESXi operates in the same way but without the service console, which results in a leaner architecture and the use of a Direct Console User Interface to manage the ESXi server.

Microsoft Hyper-V

No less impressive than VMware’s products is Microsoft Hyper-V. While it doesn’t have the state of the art chops of a VMware product, its integration with Windows and its reliability make it a formidable opponent. Hyper-V allows you to create up to 14 virtual machines and enjoy 8 terabytes of storage a month. It is worth noting that for a Windows product the user interface of the centralized console is very modern and up to date.

Citrix XenServer

While Citrix XenServer doesn’t have the name recognition of many of its competitors, it has consistently produced formidable hypervisors in the past. Citrix XenServer 7 has been positioned as an alternative to VMware’s vSphere, offering NVIDIA GRID GPU and vGPU pass-through deployments for both Windows and Linux. It also allows organizations to consolidate and contain a variety of different servers.

When Should I Virtualize?

No less important than knowing whether you want to virtualize is knowing when you should virtualize. After all, although virtualization does bring about a number of benefits, it is not for all organizations. Generally, the question of when you should virtualize can be answered by the level of technology you use, the size of your staff, budget, and organizational requirements. As a basic rule, if your company needs technology in order to be able to operate, then virtualization is a possibility.

For example, if you are maintaining computers, laptops, storage, and servers then you have the basic infrastructure in place to make virtualization a worthwhile pursuit. The next step is to think about the number of employees you have. Virtualization is only worth considering if you have more than 20 employees. Any fewer and you might as well use traditional servers to complete future operations.

Another important factor to consider is whether you can actually afford to virtualize. Make no mistake, virtualization will reduce the amount of money spent on costly hardware and make scaling up easier in future, but it comes at a substantial short-term cost. Smaller businesses may struggle to pay for virtualization in the initial stages, so it is important to be very confident in the ROI you’re going to receive.

Ultimately, you’re performing a balancing act: the aim is to virtualize at the point where your needs have grown enough that you must do so in order to perform at your best. It is far better to wait for your team’s needs to grow and then virtualize than to make the decision to transition prematurely and lose money.
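
One simple way to sanity-check the ROI is a break-even calculation: divide the upfront cost by the monthly saving. The function below is a minimal sketch of that arithmetic, and every figure in the example is hypothetical rather than taken from any real deployment.

```python
import math


def break_even_months(upfront_cost, monthly_cost_before, monthly_cost_after):
    """Months until cumulative savings cover the upfront spend, or None if never."""
    monthly_saving = monthly_cost_before - monthly_cost_after
    if monthly_saving <= 0:
        # Running costs did not go down, so the investment never pays back.
        return None
    return math.ceil(upfront_cost / monthly_saving)


# Hypothetical figures: 30,000 upfront, running costs drop from 4,000 to 2,500 a month.
print(break_even_months(30_000, 4_000, 2_500))  # 20
```

With these made-up numbers the project pays for itself after 20 months. If the expected lifetime of the hardware is shorter than the break-even period, the transition is hard to justify.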

Are Virtualized Systems Secure?

Whenever a new piece of technology is adopted into an enterprise environment, a number of challenges are raised. Virtualized systems are no exception. One of the biggest challenges raised by virtualization is complexity. When managing a traditional network, there is a wide range of common practices used to screen for security issues.

Network monitoring, antivirus platforms, and firewalls act as three methods of safeguarding against security threats under a classic system. However, by deploying virtualized infrastructure an administrator’s job becomes much more complex. It is difficult to screen for vulnerabilities and indicators of malicious software or attacks.

This is compounded by the fact that virtualized infrastructure is in a constant state of motion. Monitoring a network effectively is as much dependent on your familiarity with your systems as the tools you possess. Virtual machines can go on and offline at a staggering rate.

The term ‘virtual sprawl’ is used to describe when there are too many virtual machines to be managed. Virtual sprawl is a massive security risk because you cannot guarantee the integrity of a system you can’t manage. If you can’t monitor a system properly then you can’t ensure its security.
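
A basic defense against sprawl is a regular audit of the virtual machine inventory. The sketch below flags machines that have no owner or have been idle for too long. The inventory format, the field names, and the 30-day threshold are all assumptions chosen for illustration; a real audit would pull this data from your hypervisor's management tooling.

```python
from datetime import date, timedelta


def find_sprawl(inventory, today, max_idle_days=30):
    """Return names of VMs with no owner or no recent activity."""
    cutoff = today - timedelta(days=max_idle_days)
    flagged = []
    for vm in inventory:
        if not vm.get("owner") or vm["last_active"] < cutoff:
            flagged.append(vm["name"])
    return flagged


# Hypothetical inventory records.
inventory = [
    {"name": "web-01", "owner": "ops", "last_active": date(2024, 5, 28)},
    {"name": "test-tmp", "owner": None, "last_active": date(2024, 5, 30)},
    {"name": "old-build", "owner": "dev", "last_active": date(2024, 1, 10)},
]
print(find_sprawl(inventory, today=date(2024, 6, 1)))  # ['test-tmp', 'old-build']
```

Machines that show up in this list are candidates for review or decommissioning before they become unmanaged security risks.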

Another particularly problematic issue is that workloads can be moved to less secure virtual machines regularly. For example, if you have a very important workload that is situated on a virtual machine with high security, it can be moved as soon as the system is in need of more resources.

Managing the Risks of Virtualized Systems

Even though virtualized systems raise a number of security concerns, these can be addressed with clear protocols. At the heart of addressing cybersecurity concerns is the practice of segmentation. Segmentation means that access to network resources is restricted to certain applications and users. This controls access to these resources and makes sure that sensitive workloads don’t migrate to a less secure virtual machine.
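
A segmentation rule of this kind can be expressed as a placement check that runs before a workload is moved. The following is a minimal sketch: the security levels, segment names, and record layout are hypothetical, and real platforms enforce such policies through their own management tools.

```python
# Hypothetical ordering of security levels, lowest to highest.
SECURITY_LEVELS = {"low": 0, "medium": 1, "high": 2}


def can_place(workload, target):
    """Allow a move only to a target that is secure enough and in an allowed segment."""
    secure_enough = (
        SECURITY_LEVELS[target["security"]] >= SECURITY_LEVELS[workload["requires"]]
    )
    in_segment = target["segment"] in workload["allowed_segments"]
    return secure_enough and in_segment


def allowed_targets(workload, targets):
    """Names of every virtual machine the workload may migrate to."""
    return [t["name"] for t in targets if can_place(workload, t)]


payments = {"name": "payments", "requires": "high", "allowed_segments": {"pci"}}
targets = [
    {"name": "vm-a", "security": "high", "segment": "pci"},
    {"name": "vm-b", "security": "medium", "segment": "pci"},
    {"name": "vm-c", "security": "high", "segment": "general"},
]
print(allowed_targets(payments, targets))  # ['vm-a']
```

Here the payments workload can only land on `vm-a`: `vm-b` is not secure enough and `vm-c` sits outside the permitted segment, so a resource shortage alone can never push the workload onto either of them.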

You can also segment secure layers of your network harbouring particularly private details such as payment information and credit card details. This can be achieved through the use of software-defined networking which will allow an administrator to define several secure layers on the network.

Virtualization isn’t a Guaranteed Fix

There are many trends that come and go in IT infrastructure, but virtualization is here to stay. As organizations balloon to unforeseen heights it has become essential to consider the possibility of using virtual machines and hypervisors to extend the scale and reliability of everyday applications and devices.

The costs of managing a large amount of hardware are simply too high. It makes little sense to pay a fortune for expensive hardware that is going to consume network resources and restrict the scalability of an organization. Virtualization provides a welcome alternative that allows organizations to move beyond the limitations of physical infrastructure.

If machines are needed, they can be created. If an appliance needs an extraordinary amount of storage, it can be allocated from multiple storage resources. In the event that a system fault occurs, storage is diversified enough to make sure that your service doesn’t lose its data completely.

Overall, any organization that is growing in size and considering purchasing new infrastructure should look into the possibility of virtualizing some of its infrastructure. While this isn’t a fix-all solution, it has well-established benefits. Of course, if you want to deploy virtualization in your organization, you should make sure that you have an expert on hand to keep monitoring your solution.

If there is one potential pitfall to avoid, it is allocating a bunch of virtual machines that you have little to no control over. So, while virtualization isn’t a guaranteed fix, as long as you maintain a clear perspective of your virtual infrastructure, you have a much better chance of deploying virtualized solutions without any hiccups.